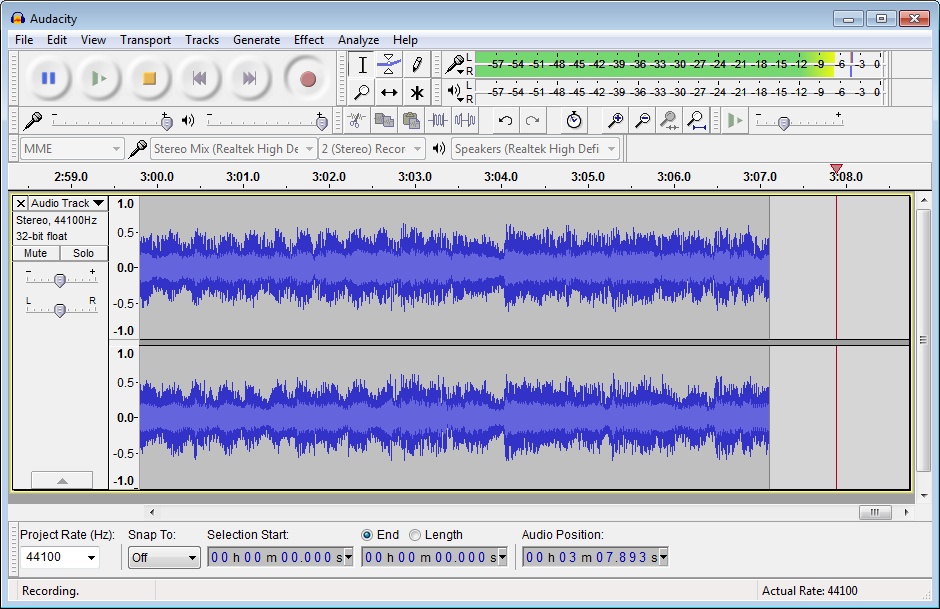

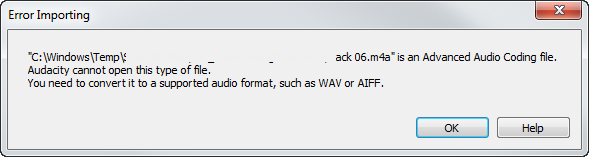

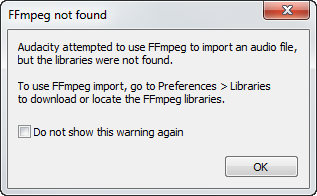

Audacity attempted to use FFmpeg to import an audio file, but the libraries were ... (2019-01-15)

FFmpeg (64bit) for Windows

After installing and building the project, I am unable to import mobile-ffmpeg-full into my project. The goal here is to encode with hardware acceleration to reduce latency and CPU usage. However, I'm pretty sure I've eliminated the transcoding part of the command, which is what I need to work in the first place. I don't know if this has to do with the codec that I am using. Optionally, a pal8 16-color video stream can be exported with or without printed metadata. If there are more than 1000 frames then the last image will be overwritten with the remaining frames, leaving only the last frame. Popen ffmpeg + arg1 + arg2 + arg3 + arg4.
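The `Popen ffmpeg + arg1 + ...` pattern mentioned above tends to break when arguments are glued together as one string. A minimal sketch, assuming Python's standard `subprocess` module and an `ffmpeg` binary on the PATH, passes each token as its own list element instead. The helper names `build_ffmpeg_command` and `run_ffmpeg` and the file names are hypothetical, chosen only for illustration.

```python
import subprocess


def build_ffmpeg_command(input_path, output_path, extra_args=()):
    """Return the ffmpeg argument list; every token is a separate element."""
    # -y overwrites the output file without prompting.
    return ["ffmpeg", "-y", "-i", str(input_path), *extra_args, str(output_path)]


def run_ffmpeg(command):
    """Run the command and return (exit code, stderr text).

    Assumes ffmpeg is installed and on the PATH (an assumption, not checked).
    """
    process = subprocess.Popen(
        command, stdout=subprocess.PIPE, stderr=subprocess.PIPE
    )
    _, stderr = process.communicate()
    return process.returncode, stderr.decode("utf-8", errors="replace")


if __name__ == "__main__":
    # Hypothetical file names; "-c copy" remuxes without re-encoding.
    print(build_ffmpeg_command("input.mp4", "output.mkv", ["-c", "copy"]))
```

Passing a list avoids shell quoting problems with spaces in file names, and ffmpeg writes its log to stderr, which is why `run_ffmpeg` returns that stream.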

Zeranoe FFmpeg With Emby Using NVENC

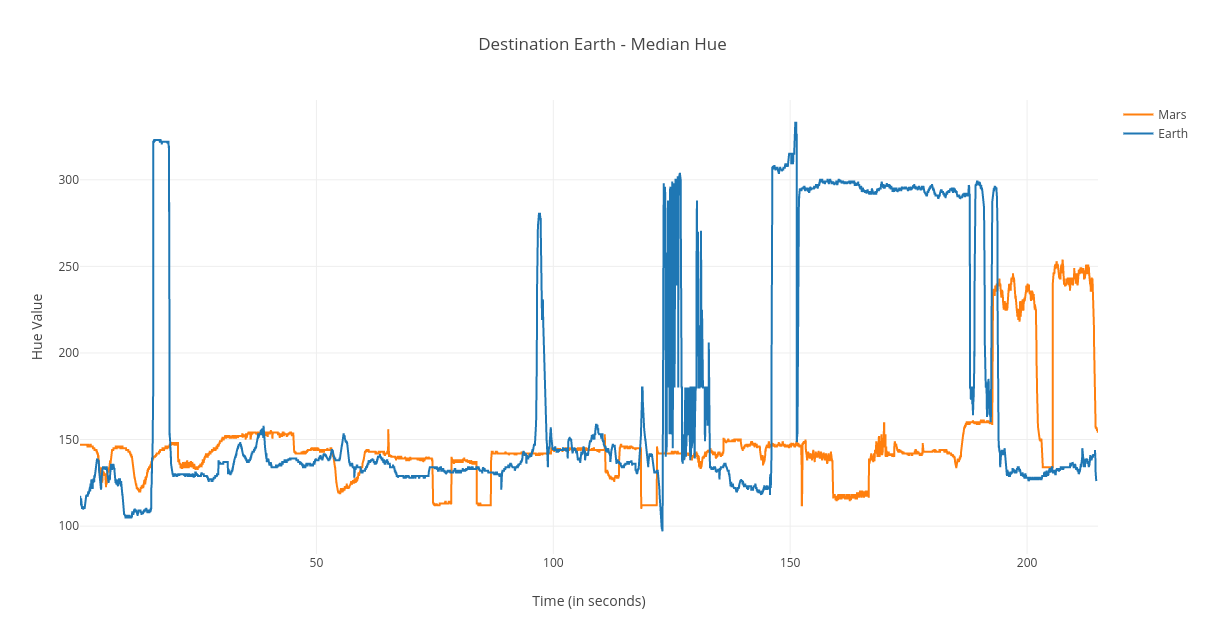

I want to create a horizontal video with adaptive background like the video I've shown. However, I'm having trouble extracting frames from the stream at the server so it can be processed by OpenCV. I am trying to find the timing of a clip within a longer clip using Python. The first one is using a Python application which gives me a list of frames that represent scenes. This value must be specified explicitly. If so, I would appreciate it if answers could be given as an exact script, since I know very little about ffmpeg scripting and likely wouldn't be able to follow.

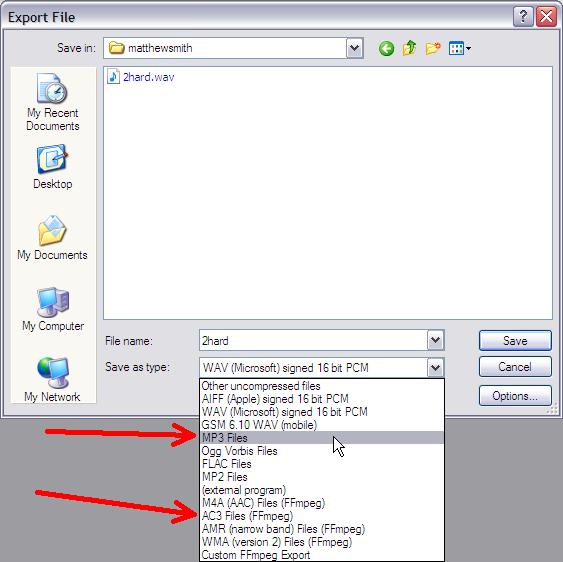

If I give a value to the 'comments' field using the Windows file properties window, and then run ffprobe on the file again, I can see the comment tag with the. Valid values are 1, 2, and 4 channel layouts. But I don't want to include the ffmpeg binary separately as it already contains ffmpeg. The size, the pixel format, and the format of each image must be the same for all the files in the sequence. But its charges are very reasonable indeed. Pay attention to '-f', 'mp3', 'pipe:1' in the last example please.
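The `'-f', 'mp3', 'pipe:1'` arguments mentioned above tell ffmpeg to write MP3 data to standard output instead of a file; the format has to be named with `-f` because there is no output file extension to infer it from. The sketch below is an illustration under assumptions, not the original example: the helper names, the default bitrate, and the input path are hypothetical.

```python
import subprocess


def build_mp3_pipe_command(input_path, bitrate="192k"):
    """Build an ffmpeg command that writes MP3 audio to stdout (pipe:1)."""
    return [
        "ffmpeg",
        "-i", str(input_path),
        "-vn",            # drop any video stream
        "-b:a", bitrate,  # audio bitrate (assumed value)
        "-f", "mp3",      # output format must be explicit when piping
        "pipe:1",         # write to standard output
    ]


def encode_to_mp3_bytes(input_path):
    """Run ffmpeg and return the encoded MP3 as bytes.

    Assumes ffmpeg is installed and on the PATH; raises CalledProcessError
    if ffmpeg exits with a non-zero status.
    """
    result = subprocess.run(
        build_mp3_pipe_command(input_path),
        stdout=subprocess.PIPE,
        stderr=subprocess.PIPE,
        check=True,
    )
    return result.stdout
```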

How I obtain videos on my gpx mp4?

I have extracted I and P frames from compressed videos. But I am unable to generate the master playlist with different profiles. When enabled, the logic monitors the flow of segment indexes. After the upgrade, the Logitech camera switched from yuv420p to yuyv422 and I lost 30 fps support at 1280x720. How can I align all streams in one time series? Yet when I drop the video into Unity it loads indefinitely and I have to force quit.

How To: free MP3 From a YouTube Video

The individual resolutions are generating fine with their own playlists. This can be useful for laptop users for example who may be using their machines in rooms without immediate access to the telephone handset. Default is the maximum possible duration which means starting a new segment regardless of the elapsed time since the last clock time. I tried this command and it works perfectly: ffmpeg -i video. For one thing it fails to strip prefixes and suffixes from the filename being passed to ffmpeg.

ffmpeg grabbing full screen youtube: first seconds of sound

When I remove packets from random I-frames, both h264 and h265 streams work as expected: playback jumps a few seconds but continues streaming. During the experiments I captured the following values: File creation. But I cannot make it work, nor can I get any logs or error information to explain why. I have checked the code but cannot identify where it makes the silence. It seems to work, but occasionally it stops responding and seems to ignore files from that point on. Can ffmpeg parse the file from the position where the video bytes start? I'm assuming there's an oddity with how Swift's Process works, but even there I've tested and I'm stumped.

Seeking is done so that all streams can be presented successfully at In point. Is there any other or faster way to extract video frames accurately? I know how to make an image sprite with PHP, but I don't know how to make the screenshots of each video with the time in seconds. Use -formats to view a combined list of enabled demuxers and muxers. I can see the video without any issue, but I keep getting the error below in the console. I have done some searching and tried different alternatives for converting an OpenCV image to something that would be acceptable for ffmpeg+libx264. When I add an image over the video, the first scene freezes after about 3 seconds for less than a second; everything works fine after that. Any help will be highly appreciated.
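One common way to hand OpenCV images to ffmpeg+libx264 is to pipe raw frames to ffmpeg's standard input. The sketch below assumes each frame arrives as raw BGR bytes (for an OpenCV image, `frame.tobytes()` would produce this), that ffmpeg is on the PATH with libx264 enabled, and that the dimensions are even, which yuv420p output requires. The function names and parameters are hypothetical.

```python
import subprocess


def build_rawvideo_command(width, height, fps, output_path):
    """Build an ffmpeg command that reads raw BGR frames from stdin."""
    return [
        "ffmpeg", "-y",
        "-f", "rawvideo",           # input is headerless raw video
        "-pix_fmt", "bgr24",        # OpenCV's default channel order
        "-s", f"{width}x{height}",  # size must be given for raw input
        "-r", str(fps),
        "-i", "pipe:0",             # read frames from standard input
        "-c:v", "libx264",
        "-pix_fmt", "yuv420p",      # widely supported output pixel format
        str(output_path),
    ]


def write_frames(frames, width, height, fps, output_path):
    """Pipe an iterable of raw BGR frame bytes to ffmpeg; return its exit code."""
    expected_size = width * height * 3  # 3 bytes per pixel for bgr24
    process = subprocess.Popen(
        build_rawvideo_command(width, height, fps, output_path),
        stdin=subprocess.PIPE,
    )
    try:
        for frame in frames:
            if len(frame) != expected_size:
                raise ValueError("frame size does not match width x height")
            process.stdin.write(frame)
    finally:
        # Closing stdin signals end of input so ffmpeg can finish the file.
        process.stdin.close()
        process.wait()
    return process.returncode
```

Every frame must share the same size and pixel format, since raw video carries no per-frame header.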

The quickest way to discover this is to use a neat little util called lsdvd written by Chris Phillips and available from. Most related posts I've found were troubleshooting more complex operations past this step. The ProcessBuilder just won't use the right arguments. I want to tell ffmpeg not to touch the height or width of any video smaller than 480p, and otherwise to encode and resize it to 480p. Here's my command, but it always scales up the video if it is smaller than 480p: ffmpeg -i input. Can we use ffmpeg to convert S3 files and store them back using Django? Again, my problem is overlapping the. Bandwidth is limited, and so the stream must be below 1Mbps.
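For the 480p question above, one possible approach is the `scale` filter with a `min()` expression, so the target height never exceeds the input height (`ih`). This is a sketch under assumptions, not the asker's truncated command: the helper names and file names are hypothetical. Note that ffmpeg still re-encodes smaller videos with this command; only their dimensions are left alone.

```python
def build_downscale_filter(max_height=480):
    """Return a scale filter that shrinks to max_height but never upscales.

    -2 lets ffmpeg pick a width that keeps the aspect ratio and is even.
    The single quotes are ffmpeg's own filter quoting and protect the comma
    inside min().
    """
    return f"scale=-2:'min({max_height},ih)'"


def build_downscale_command(input_path, output_path, max_height=480):
    """Build the full ffmpeg argument list (file names are hypothetical)."""
    return [
        "ffmpeg", "-i", str(input_path),
        "-vf", build_downscale_filter(max_height),
        str(output_path),
    ]


if __name__ == "__main__":
    print(build_downscale_command("input.mp4", "output.mp4"))
```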